Introduction

Artificial intelligence (AI) is an interdisciplinary field that draws on computer science, cybernetics, neurophysiology, psychology, and other disciplines. Its core aim is to simulate human perception, reasoning, decision-making, and learning, thereby extending and enhancing natural intelligence.

From the vague conception of intelligent machines to the deep integration of AI into daily life, the journey has been full of twists, marked by both groundbreaking advances and periods of stagnation. Generations of researchers have persevered, driving the technology toward a new stage of coexistence with humanity.

This article outlines the development of artificial intelligence from its origins to the present. It analyzes the technological breakthroughs and limitations of each period to reconstruct this long history of technological evolution.

1. The Birth of AI: The Rise of Symbolism (1940s-1960s)

This phase centers on the initial exploration of symbolism, marking the formal birth of artificial intelligence. Key milestones include:

- 1910-1913: Alfred North Whitehead and Bertrand Russell publish “Principia Mathematica,” establishing a rigorous system of mathematical logic that later provided the theoretical foundation for machines that simulate human reasoning.

- 1943: McCulloch and Pitts propose the MP model, a mathematical model of the artificial neuron, initiating research on artificial neural networks.

- 1946: ENIAC, widely regarded as the first general-purpose electronic digital computer, is unveiled, providing the hardware foundation on which artificial intelligence would later be built.

- 1949: Hebb proposes the Hebbian learning rule, which holds that the connection between two neurons strengthens when they are repeatedly active together. This principle later became a significant source of inspiration for machine learning.

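- 1950: Turing proposes the Turing Test (the “imitation game”) in “Computing Machinery and Intelligence,” offering a behavioral criterion for judging machine intelligence. He had also designed one of the earliest chess-playing programs, which had to be executed by hand because no available computer could run it.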

- 1956: The Dartmouth Conference is held, and the term “artificial intelligence,” coined by McCarthy in the conference proposal, is formally adopted, marking the official birth of AI. Around the same time, Newell, Simon, and Shaw develop the “Logic Theorist,” and Samuel publicly demonstrates a checkers program capable of learning from experience.

- 1958: McCarthy invents the LISP programming language, which becomes a core tool for early AI development.
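The MP neuron and Hebb's rule from the milestones above are simple enough to sketch directly. The Python below is a minimal illustration, not a historical reconstruction. It uses the common modern simplification of a threshold unit with real-valued weights, whereas the 1943 formulation used binary excitatory and inhibitory inputs. It also writes Hebb's verbal principle as the later textbook update Δw = η·x·y. The function names and the learning rate `rate` are hypothetical choices.

```python
# Minimal sketch (assumptions: 0/1 inputs, real-valued weights, fixed threshold;
# Hebb's rule written as the textbook update delta_w = rate * x * y).

def mp_neuron(inputs, weights, threshold):
    """Threshold unit: fire (1) if the weighted input sum reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def hebbian_update(weights, inputs, output, rate=0.1):
    """Hebb's rule: strengthen each weight in proportion to input * output activity."""
    return [w + rate * x * output for w, x in zip(weights, inputs)]


# With unit weights, threshold 2 behaves as logical AND and threshold 1 as logical OR.
patterns = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_outputs = [mp_neuron(p, [1, 1], threshold=2) for p in patterns]
or_outputs = [mp_neuron(p, [1, 1], threshold=1) for p in patterns]

# Hebbian learning: input 0 and the output are active together, so w[0] grows;
# input 1 stays silent, so w[1] never changes.
weights = [0.0, 0.0]
for _ in range(5):
    weights = hebbian_update(weights, inputs=(1, 0), output=1)

print(and_outputs)  # [0, 0, 0, 1]
print(or_outputs)   # [0, 1, 1, 1]
print([round(w, 3) for w in weights])
```

Changing only the threshold switches the unit between AND and OR. Nothing in the MP model itself adjusts the weights, however. Hebb's rule supplies one way to change them from experience.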

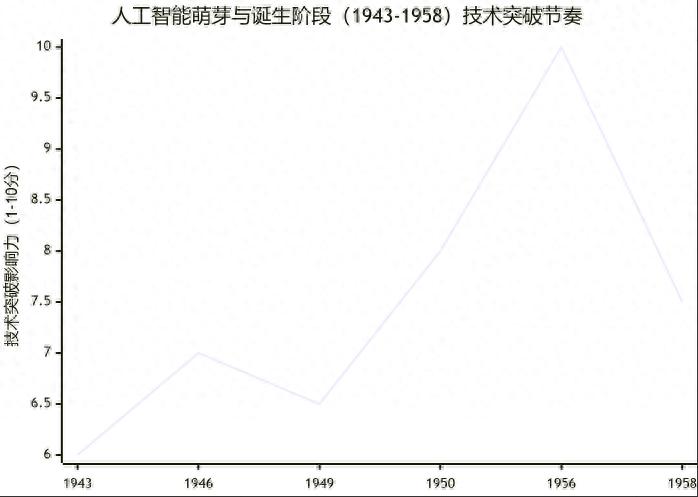

This phase shows a gradual upward trend in technological development, with core achievements concentrated in theoretical foundations and initial practices. The following line chart visually represents the rhythm of technological breakthroughs in this phase:

Despite a series of breakthroughs during this period, technological limitations gradually became apparent. Early AI systems were highly dependent on manually written logical rules, lacking the ability to learn autonomously and adapt to complex environments. They could only handle simple problems in specific domains and were unable to cope with the uncertainties and complex semantics of the real world.

For instance, the checkers program could handle only that single game and could not transfer to other games. The Logic Theorist could prove only simple mathematical theorems and was hard to apply to real-world problems. In addition, the limited memory and processing speed of computers at the time could support neither complex algorithms nor the extensive knowledge bases that richer behavior would require.

These limitations led to a bottleneck in early AI development, widening the gap between high expectations for AI and its actual application effectiveness, setting the stage for the first AI winter.

2. The First Boom and Stagnation: The Rise and Fall of Expert Systems (1970s-Early 1980s)

This phase focuses on the rise and prosperity of expert systems, followed by a decline due to technological limitations, with key milestones including:

- 1966-1972: The Stanford Research Institute develops the first AI mobile robot, Shakey, capable of autonomously perceiving its environment and planning its route. During this period, MIT’s Weizenbaum releases the world’s first chatbot, ELIZA.

- 1968: Feigenbaum and colleagues at Stanford develop DENDRAL, generally regarded as the first expert system. DENDRAL infers the molecular structure of organic compounds from mass spectrometry data, and its development marks the birth of the expert systems branch.

- 1971: Shortliffe and colleagues at Stanford University begin developing the medical expert system MYCIN. MYCIN uses a “knowledge base + inference engine” structure and introduces “certainty factors” to support reasoning under uncertainty.

- 1976: A team at the Stanford Research Institute led by Duda begins developing PROSPECTOR, a geological exploration expert system. The team completes it in 1981, helping spark an AI “gold rush.”

- Late 1970s-Early 1980s: Businesses invest heavily in AI, and expert systems are widely applied. However, their technological limitations become increasingly evident, funding agencies withdraw support, and AI enters its first period of stagnation.
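MYCIN's “knowledge base + inference engine” split and its certainty factors can be illustrated with a small forward-chaining engine. This is a minimal sketch under stated assumptions. It uses the certainty-factor combination rule as usually presented in textbook descriptions of MYCIN and its successor EMYCIN. A conjunction of premises takes the weakest premise's certainty, and a rule fires only when that certainty exceeds 0.2. The rules, facts, and the names `combine_cf` and `infer` are hypothetical.

```python
# Toy "knowledge base + inference engine" with MYCIN-style certainty factors.
# The rules, facts, and 0.2 firing threshold are illustrative assumptions;
# they are not taken from MYCIN's real knowledge base.

def combine_cf(cf1, cf2):
    """Merge two certainty factors (each in [-1, 1]) for the same conclusion."""
    if cf1 >= 0 and cf2 >= 0:            # both confirm: evidence accumulates toward 1
        return cf1 + cf2 * (1 - cf1)
    if cf1 < 0 and cf2 < 0:              # both disconfirm: evidence accumulates toward -1
        return cf1 + cf2 * (1 + cf1)
    denom = 1 - min(abs(cf1), abs(cf2))  # conflicting evidence partly cancels out
    if denom == 0:
        raise ValueError("definite evidence both for and against the same conclusion")
    return (cf1 + cf2) / denom


# Knowledge base: (premises, conclusion, rule CF). Hypothetical toy rules.
RULES = [
    (["gram_negative", "rod_shaped"], "enterobacteriaceae", 0.8),
    (["anaerobic"], "enterobacteriaceae", 0.4),
    (["enterobacteriaceae", "hospital_acquired"], "resistant_strain", 0.6),
]


def infer(facts, rules, threshold=0.2):
    """Inference engine: forward-chain until no further rule can fire."""
    cf = dict(facts)
    fired = set()
    changed = True
    while changed:
        changed = False
        for i, (premises, conclusion, rule_cf) in enumerate(rules):
            if i in fired or not all(p in cf for p in premises):
                continue
            premise_cf = min(cf[p] for p in premises)  # AND = weakest premise
            if premise_cf <= threshold:
                continue                                # too uncertain to fire yet
            fired.add(i)
            new_cf = rule_cf * premise_cf
            cf[conclusion] = combine_cf(cf[conclusion], new_cf) if conclusion in cf else new_cf
            changed = True
    return cf


observations = {"gram_negative": 0.9, "rod_shaped": 0.7, "anaerobic": 0.5, "hospital_acquired": 1.0}
result = infer(observations, RULES)
print({k: round(v, 3) for k, v in result.items()})
```

Two independent rules both support “enterobacteriaceae,” so their certainties combine to a value higher than either alone. Keeping the rules as data, separate from the `infer` loop, is the design choice that let expert-system shells reuse one inference engine across domains. It is also why each new domain required the costly knowledge acquisition described below.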

The limitations behind the prosperity of expert systems became apparent, ultimately leading to the first AI winter. The expert systems faced significant challenges:

- Difficult knowledge acquisition: the performance of expert systems relied heavily on input from domain experts. Manually extracting and organizing that knowledge was cumbersome and inefficient, making it hard to cover complex fields completely.

- Poor generalizability: each expert system could address only problems in its own domain. It could not be applied across fields and adapted poorly to changing environments. Faced with a problem outside its knowledge base, it simply failed.

- Insufficient hardware: the limited memory and processing speed of computers at the time could not support large knowledge bases and complex reasoning algorithms, so expert systems ran inefficiently.

Moreover, the high expectations for AI went unmet, so funding agencies such as the UK government and the U.S. Department of Defense’s ARPA gradually stopped funding AI research. The U.S. National Research Council, after spending $20 million on machine translation research, also ended its support. Scientific and commercial activity around AI declined sharply, and the field stagnated through much of the 1970s.

3. The Second Boom and Stagnation: The Revival and Setbacks of Connectionism (1980s-Early 1990s)

This phase centers on the revival of connectionism, with breakthroughs in multilayer neural networks, followed by another period of stagnation. Key milestones include:

- 1981: Japan’s Ministry of International Trade and Industry allocates $850 million to initiate the Fifth Generation Computer Project, aiming to develop intelligent computers with autonomous learning, reasoning, and decision-making capabilities, sparking global technological competition.

- 1984: Douglas Lenat launches the Cyc project, aiming to build a vast common-sense knowledge base to advance AI towards general intelligence.

- 1986: Rumelhart, Hinton, and Williams publish a paper in “Nature” showing that the backpropagation (BP) algorithm can train multilayer neural networks to learn useful internal representations, reviving research on artificial neural networks.

- Late 1980s: Nearly half of the Fortune 500 companies develop or use expert systems, widely applied in industrial manufacturing, finance, and healthcare.

- 1987-1993: Connectionism faces bottlenecks in training efficiency and overfitting, while the drawbacks of expert systems become more pronounced, leading to reduced funding and the onset of the second AI winter.
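The central idea of the 1986 backpropagation paper is that the error at the output can be propagated backward through the network to compute how every weight should change. The pure-Python sketch below trains a tiny two-layer sigmoid network on XOR, a task a single-layer perceptron cannot solve. The layer sizes, learning rate, epoch count, squared-error loss, and all function names are illustrative assumptions, not settings from the paper.

```python
import math
import random

# Minimal backpropagation sketch: a 2-4-1 sigmoid network trained on XOR with
# per-example gradient descent on squared error (all settings are assumptions).

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_params(n_in=2, n_hid=4, seed=0):
    rng = random.Random(seed)
    return {
        "W1": [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hid)],
        "b1": [0.0] * n_hid,
        "W2": [rng.uniform(-1, 1) for _ in range(n_hid)],
        "b2": 0.0,
    }

def forward(p, x):
    """Return hidden activations and the network output for input x."""
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(p["W1"], p["b1"])]
    y = sigmoid(sum(w * hj for w, hj in zip(p["W2"], h)) + p["b2"])
    return h, y

def total_loss(p, data):
    return sum(0.5 * (forward(p, x)[1] - t) ** 2 for x, t in data)

def train_epoch(p, data, lr=0.5):
    for x, t in data:
        h, y = forward(p, x)
        # Output delta: d(loss)/d(z_out) = (y - t) * sigmoid'(z_out)
        delta_out = (y - t) * y * (1 - y)
        # Hidden deltas: send the output delta back through W2 (before W2 is updated)
        delta_hid = [delta_out * w * hj * (1 - hj) for w, hj in zip(p["W2"], h)]
        for j, hj in enumerate(h):
            p["W2"][j] -= lr * delta_out * hj
            for i, xi in enumerate(x):
                p["W1"][j][i] -= lr * delta_hid[j] * xi
            p["b1"][j] -= lr * delta_hid[j]
        p["b2"] -= lr * delta_out

XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
params = init_params()
initial = total_loss(params, XOR)
for _ in range(5000):
    train_epoch(params, XOR)
final = total_loss(params, XOR)
print(f"loss {initial:.4f} -> {final:.4f}")
print([round(forward(params, x)[1], 2) for x, _ in XOR])
```

The hidden deltas are computed from the output delta before any weight changes. Each update is therefore the exact gradient of that example's squared error. With online updates like these, the total loss usually falls during training, though not strictly monotonically. Even this toy run hints at the training-efficiency problem mentioned above: thousands of passes over four examples are needed.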

However, this boom did not last long, as technological limitations and market changes once again led to stagnation in AI.

On one hand, connectionism hit bottlenecks. Multilayer neural networks solved some learning problems, but they trained slowly and tended to overfit. The scarcity of data also kept them from tackling complex intelligent tasks.

On the other hand, the drawbacks of expert systems became increasingly evident. Their high software and hardware costs and limited practicality could not meet market demands. By 1987, desktop computers from Apple and IBM had become more powerful than the expensive specialized Lisp machines that ran many expert systems, at a fraction of the cost. Businesses gradually abandoned expert systems in favor of more practical computer applications.

Additionally, the new leadership at the U.S. Department of Defense’s ARPA believed AI was not the “next wave” and shifted funding to projects with more immediate results, further exacerbating the decline of AI. Starting in 1987, AI entered its second winter, lasting until 1993.

4. Recovery and Breakthrough: The Rise of Statistical Learning and Deep Learning (1990s-2010s)

This phase centers on the maturation of statistical learning and breakthroughs in deep learning, leading to a gradual recovery and rapid development of AI. Key milestones include:

- 1995: Cortes and Vapnik propose the soft-margin support vector machine (SVM), which, combined with kernel methods for nonlinear classification, marks the maturity of statistical learning theory. SVMs become widely applied in text classification and image recognition.

- May 1997: IBM’s “Deep Blue” supercomputer defeats world chess champion Garry Kasparov. It becomes the first computer system to defeat a reigning world champion in a match under standard time controls, advancing the field of machine game playing.

- 2002: iRobot launches the Roomba vacuuming robot, an early representative of home AI products, capable of autonomous obstacle avoidance and route planning. Later models added self-charging.

- 2011: IBM Watson defeats two human champions on the U.S. quiz show “Jeopardy!”, showcasing breakthroughs in natural language processing and knowledge retrieval.

- 2012: A team of Canadian neuroscientists creates Spaun, a large-scale brain model that can perform simple cognitive tasks, including pattern-completion problems of the kind found in IQ tests. The same year, Krizhevsky et al. present AlexNet at NIPS 2012. It wins the ImageNet image recognition competition by a wide margin and propels the rise of deep learning.

- 2013: Facebook establishes an AI research lab, Google acquires DNNResearch, and Baidu creates a deep learning research institute, driving the iteration of deep learning technology.

- 2014: The chatbot “Eugene Goostman” is reported to have passed a Turing Test event after convincing 33% of judges that it was human. The claim is widely disputed, but it drew broad attention to natural language interaction.

- 2017: A Google team including Ashish Vaswani publishes “Attention Is All You Need” at NIPS 2017 (now NeurIPS), proposing the Transformer architecture based on self-attention. It dispenses with the recurrence used in recurrent neural networks (RNNs) and long short-term memory networks (LSTMs). This greatly improves parallelization and the handling of long-range dependencies, laying the crucial foundation for later models such as GPT and BERT.

The later part of this phase sees the emergence of the Transformer architecture as a critical turning point in the evolution of deep learning towards large models.

The technological breakthroughs of this period addressed many of the previous limitations of AI. The accumulation of data, enhancement of computational power, and innovation of algorithms enabled AI to handle more complex tasks, significantly improving generalization and adaptability.

The publication of the Transformer paper in 2017 was a milestone. Its self-attention mechanism does not rely on recurrent or convolutional structures. Instead, it lets every position in the input sequence attend to all other positions simultaneously. This improves training efficiency and paved the way for large-scale language models. The Transformer has become the “standard building block” of modern large models, and nearly all cutting-edge generative AI systems are built on it.
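The paper's scaled dot-product attention computes softmax(QKᵀ/√d_k)V. The pure-Python sketch below shows the single-head case under a simplifying assumption: the queries, keys, and values are the raw input vectors. A real Transformer learns separate projection matrices, uses multiple heads, and adds positional encodings. The function names and the toy `tokens` are hypothetical.

```python
import math

# Minimal single-head scaled dot-product self-attention.
# Assumption: Q = K = V = the input vectors (no learned projections, no masking).

def softmax(scores):
    m = max(scores)                       # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(X):
    """Return (outputs, weights); weights[i][j] is how much position i attends to j."""
    d = len(X[0])
    outputs, weights = [], []
    for q in X:
        # Each query is scored against every position; no recurrence is involved.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in X]
        w = softmax(scores)
        weights.append(w)
        # The output is the attention-weighted average of the value vectors.
        outputs.append([sum(w[j] * X[j][c] for j in range(len(X))) for c in range(d)])
    return outputs, weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = self_attention(tokens)
for row in attn:
    print([round(a, 3) for a in row])
```

The loop runs sequentially here for readability. Each position's output, however, depends only on the inputs and not on earlier outputs. This is what lets real implementations compute all positions at once as matrix products. In the example, the third token [1, 1] has the largest dot product with itself, so it places the most weight on its own position and equal weight on the other two.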

However, limitations still exist in this phase: deep learning requires a large amount of labeled data, and the costs of data acquisition and labeling are high; the interpretability of algorithms is poor, making it difficult to explain the specific logic behind AI decisions, often referred to as “black box models”; furthermore, the application scope of AI remains limited to specific domains, failing to achieve general intelligence and unable to flexibly respond to various complex scenarios like humans.

5. Explosion and Coexistence: The Arrival of the Large Model Era (2020-Present)

This phase centers on the rise of large models, with AI entering an explosive development phase, moving towards general intelligence. Key milestones include:

- 2020: Brown et al. present GPT-3, a Transformer-based model with 175 billion parameters, at NeurIPS 2020. It showcases emergent capabilities such as in-context learning and drives the commercialization of generative AI.

- 2021: DeepMind releases AlphaFold 2 structure predictions covering 98.5% of human proteins, ushering in a new era in computational biology.

- 2022: ChatGPT, built on OpenAI’s Transformer-based GPT models, is released in November and passes one million users within about five days, pushing generative AI into mainstream use.

- 2023: OpenAI releases GPT-4 with vision (GPT-4V), a multimodal large model that reasons jointly over images and text. It enhances AI’s perception and understanding while keeping the Transformer as its core architecture.

- 2025: China has over 6,000 AI companies, and the scale of its core AI industry is expected to exceed 1.2 trillion yuan. The national standard series for “Artificial Intelligence Large Models” is officially implemented to regulate industry development.

Despite the unprecedented breakthroughs in AI during the large model era, numerous challenges remain.

Ethical issues are increasingly prominent. The authenticity of generated content is hard to guarantee, and algorithmic bias may exacerbate social injustice. Training large models also consumes immense computational resources and energy, putting pressure on the environment.

Moreover, artificial general intelligence (AGI) has yet to be achieved. Large models exhibit strong emergent capabilities, but they still fall well short of human intelligence in areas such as self-awareness and emotional understanding. They cannot fully replicate the complexity of human thought and behavior.

It is worth emphasizing that the explosive growth of modern large models rests fundamentally on the Transformer architecture introduced in 2017. Its self-attention mechanism enables parallel training, long-text processing, and efficient learning, and it has become the basic standard for large model development.

The series of national standards for “Artificial Intelligence Large Models,” led by the China Electronics Standardization Institute, has been officially implemented, providing unified regulations for the development of the large model industry and promoting healthy and orderly industry growth.

Conclusion

Looking back at the development of artificial intelligence, from the initial exploration of symbolism to the revival of connectionism, and from the rise of statistical learning to the explosion of large models, each breakthrough has relied on the perseverance and innovation of researchers. Each period of stagnation has provided lessons for the next leap. Notably, the 2017 Transformer paper was a critical turning point: its architecture directly propelled AI from the deep learning stage into the large model era and became the core technical standard for modern large models. The development of AI is, in essence, humanity’s exploration and simulation of its own intelligence, and along the way the boundary between humans and machines has gradually blurred.

Machines increasingly resemble humans because AI continues to simulate human perception, reasoning, learning, and even emotional expression. Today’s large models can understand human language, recognize human emotions, learn new knowledge autonomously, and solve complex problems. In certain areas they even outperform humans. Deep learning pioneer Geoffrey Hinton has argued that humans and large language models understand language in much the same way, and that machines, like humans, can produce “hallucinations.” This view points to a deep connection between machine intelligence and human intelligence. Machines are steadily approaching human-level intelligence, from ELIZA’s simple language interactions to ChatGPT’s natural conversations, and from Deep Blue’s chess victory to AlphaGo’s win at Go.

This trend of mutual approach and integration is not a confrontation between humans and machines, but the beginning of coexistence and integration. As Academician Li Deyi proposed, human intelligence and machine intelligence are “physically homologous and mathematically isomorphic”; both systems absorb information to resist entropy increase and maintain their own order. Human advantages lie in embodied exploration and reasoning decision-making, while machines excel in high-speed modeling and data processing. The deep integration of both will create a more efficient collaborative whole, driving human civilization to evolve to higher levels. The future development of artificial intelligence will still be full of challenges, but it is certain that the coexistence and integration of humans and machines will become the ultimate direction of AI development, reshaping the future of humanity.
